tf.keras and TensorFlow: Batch Normalization to train deep neural networks faster | by Chris Rawles | Towards Data Science
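The technique named in the title normalizes each feature using the mean and variance of the current mini-batch, then applies a learned scale (gamma) and shift (beta). Below is a minimal from-scratch sketch in plain Python. It is not Keras's implementation. The function name `batch_norm_train` is hypothetical, and the `eps=1e-3` default mirrors the `epsilon` default of `tf.keras.layers.BatchNormalization`.

```python
import math
from typing import List

def batch_norm_train(batch: List[List[float]], gamma: List[float],
                     beta: List[float], eps: float = 1e-3) -> List[List[float]]:
    """Normalize each feature (column) of a mini-batch with that batch's own
    mean and variance, then apply the learned scale (gamma) and shift (beta)."""
    n = len(batch)
    num_features = len(batch[0])
    out = [[0.0] * num_features for _ in range(n)]
    for j in range(num_features):
        column = [row[j] for row in batch]
        mean = sum(column) / n
        var = sum((x - mean) ** 2 for x in column) / n  # biased (population) variance
        inv_std = 1.0 / math.sqrt(var + eps)            # eps keeps this safe when var == 0
        for i in range(n):
            out[i][j] = gamma[j] * (batch[i][j] - mean) * inv_std + beta[j]
    return out

# Two examples, two features on very different scales.
normalized = batch_norm_train([[1.0, 10.0], [3.0, 30.0]],
                              gamma=[1.0, 1.0], beta=[0.0, 0.0], eps=0.0)
```

With `gamma=1`, `beta=0` and no epsilon, both columns come out as `[-1, 1]` even though their raw scales differ by a factor of ten. This rescaling is what keeps later layers from having to chase shifting input distributions.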

François Chollet on Twitter: "10) Some layers, in particular the `BatchNormalization` layer and the `Dropout` layer, have different behaviors during training and inference. For such layers, it is standard practice to expose
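The tweet notes that `BatchNormalization` and `Dropout` behave differently during training and inference. The following sketch illustrates that difference in plain Python for a single feature. During training, the batch-norm layer normalizes with the current batch's statistics and folds them into moving averages. During inference, it uses those moving averages, so one example's output no longer depends on the rest of the batch. Dropout zeroes units only during training, using the "inverted" scaling by `1 / (1 - rate)`. The class `ToyBatchNorm` and function `dropout` are hypothetical names. The defaults (`momentum=0.99`, moving mean 0, moving variance 1) are assumptions chosen to match Keras's defaults.

```python
import math
import random
from typing import List

class ToyBatchNorm:
    """Single-feature batch normalization with training and inference modes (sketch)."""

    def __init__(self, momentum: float = 0.99, eps: float = 1e-3):
        self.momentum = momentum
        self.eps = eps
        self.gamma, self.beta = 1.0, 0.0
        self.moving_mean, self.moving_var = 0.0, 1.0

    def __call__(self, xs: List[float], training: bool) -> List[float]:
        if training:
            # Training: normalize with the current mini-batch's statistics...
            mean = sum(xs) / len(xs)
            var = sum((x - mean) ** 2 for x in xs) / len(xs)
            # ...and fold them into exponential moving averages for inference.
            m = self.momentum
            self.moving_mean = m * self.moving_mean + (1 - m) * mean
            self.moving_var = m * self.moving_var + (1 - m) * var
        else:
            # Inference: use the accumulated moving statistics instead.
            mean, var = self.moving_mean, self.moving_var
        inv_std = 1.0 / math.sqrt(var + self.eps)
        return [self.gamma * (x - mean) * inv_std + self.beta for x in xs]

def dropout(xs: List[float], rate: float, training: bool, rng: random.Random) -> List[float]:
    """Inverted dropout: drop units and rescale survivors only while training."""
    if not training or rate == 0.0:
        return list(xs)
    keep = 1.0 - rate
    return [x / keep if rng.random() < keep else 0.0 for x in xs]

bn = ToyBatchNorm(momentum=0.5, eps=0.0)
train_out = bn([1.0, 3.0], training=True)   # uses batch mean 2.0, variance 1.0
infer_out = bn([1.0, 3.0], training=False)  # uses moving mean 1.0, variance 1.0
```

Passing `training` explicitly mirrors the convention the tweet describes. Forgetting to switch modes is a common source of train/test discrepancies.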

![\[How to Solve\] invalid argument: Nan in summary histogram for: image_pooling/BatchNorm/moving_variance_1 | DebugAH](https://debugah.com/wp-content/uploads/2021/06/91326d07ee8288a8a44f66fe6b492d88f7b.png)

[How to Solve] invalid argument: Nan in summary histogram for: image_pooling/BatchNorm/moving_variance_1 | DebugAH
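A NaN in `moving_variance` tends to be permanent. The moving statistics are exponential moving averages, so once a single batch contributes a NaN, every later update computes `momentum * nan + ...`, which is still NaN. The plain-Python sketch below demonstrates this propagation. It also shows a hypothetical fail-fast helper, `check_finite`, which reports the problem at the batch where it first appears. It is similar in spirit to TensorFlow's `tf.debugging.check_numerics`, but it is not that API. The batch variances in the loop are made-up illustrative values.

```python
import math

def update_moving(moving: float, batch_stat: float, momentum: float = 0.99) -> float:
    """Exponential moving average, the kind of update used for BN moving statistics."""
    return momentum * moving + (1.0 - momentum) * batch_stat

def check_finite(name: str, value: float) -> float:
    """Fail fast when a statistic is NaN or infinite instead of letting it persist."""
    if not math.isfinite(value):
        raise ValueError(f"non-finite value in {name}: {value}")
    return value

moving_var = 1.0
# One bad batch (for example, one whose inputs already contained NaN) poisons the average.
for batch_var in [0.8, float("nan"), 0.9, 1.1]:
    moving_var = update_moving(moving_var, batch_var)
print(moving_var)  # nan: later finite batches cannot wash it out
```

Calling `check_finite` inside the loop instead would raise at the second batch. At that point, the inputs and preceding layers that produced the NaN are still easy to inspect.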

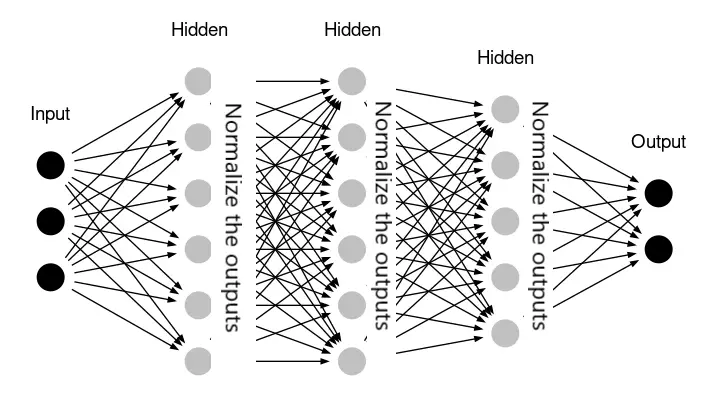

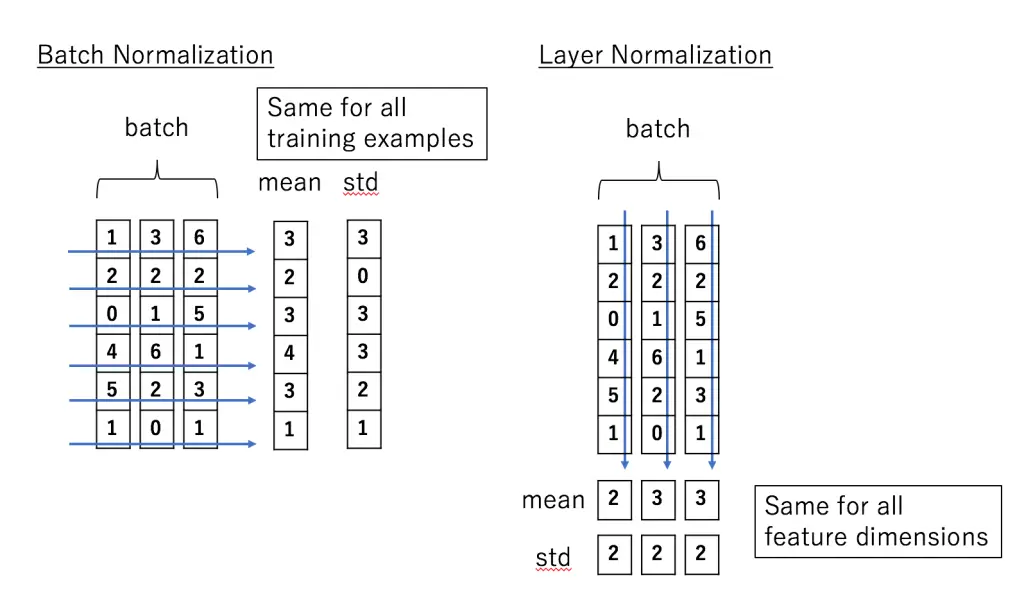

Keras Normalization Layers - Batch Normalization and Layer Normalization Explained for Beginners - MLK - Machine Learning Knowledge
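The practical difference between the two layers is the axis over which statistics are computed. Batch normalization computes a mean and variance per feature across the examples in a batch. Layer normalization computes them per example across that example's features, so it does not depend on batch size or on other examples. A minimal plain-Python sketch follows. The helper names are hypothetical, and the learned scale and shift are omitted for brevity.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows are examples, columns are features

def _normalize(values: List[float], eps: float) -> List[float]:
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]

def batch_norm(x: Matrix, eps: float = 1e-3) -> Matrix:
    # Statistics per feature (column), computed across the examples in the batch.
    columns = [_normalize(list(col), eps) for col in zip(*x)]
    return [list(row) for row in zip(*columns)]

def layer_norm(x: Matrix, eps: float = 1e-3) -> Matrix:
    # Statistics per example (row), computed across that example's own features.
    return [_normalize(row, eps) for row in x]

x = [[1.0, 2.0, 3.0],
     [10.0, 20.0, 30.0]]
bn_out = batch_norm(x, eps=0.0)  # every column becomes [-1, 1]
ln_out = layer_norm(x, eps=0.0)  # both rows map to the same pattern
```

Because `layer_norm` treats each row independently, normalizing a single example gives the same result as normalizing it inside a larger batch. `batch_norm` does not have this property.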

![\[Solved\] Python Loss of CNN in Keras becomes nan at some point of training - Code Redirect](https://i.stack.imgur.com/625eB.png)
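A loss that "becomes nan at some point" often started as a loss that grew without bound. This sketch assumes the cause is a learning rate that is too large, which is only one of several possible causes. It runs gradient descent on the toy loss `w * w` and halts at the first non-finite loss. That is the same idea as Keras's `tf.keras.callbacks.TerminateOnNaN` callback, but `train_quadratic` is a hypothetical stand-in, not that callback. In Keras, other common mitigations include a lower learning rate or gradient clipping through an optimizer's `clipnorm`/`clipvalue` arguments.

```python
import math
from typing import List, Optional, Tuple

def train_quadratic(lr: float, steps: int = 2000,
                    w: float = 1.0) -> Tuple[List[float], Optional[int]]:
    """Gradient descent on the toy loss w*w, stopping at the first non-finite loss.

    Returns the finite loss history and the step at which training halted
    (None if all steps completed).
    """
    history: List[float] = []
    for step in range(steps):
        loss = w * w
        if not math.isfinite(loss):
            # Halt instead of continuing to train on inf/NaN values.
            return history, step
        history.append(loss)
        grad = 2.0 * w  # derivative of w*w
        w = w - lr * grad
    return history, None

diverged, stopped_at = train_quadratic(lr=1.5)          # each step multiplies w by -2
converged, converged_stop = train_quadratic(lr=0.1)     # each step multiplies w by 0.8
```

With `lr=1.5`, the loss quadruples every step until it overflows to `inf`. One more update would produce `inf - inf`, which is NaN. With `lr=0.1`, the same loop shrinks toward zero and runs to completion.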